By Matthew B. Harrison

TALKERS, VP/Associate Publisher

Harrison Media Law, Senior Partner

Goodphone Communications, Executive Producer

The Problem Is No Longer Spotting a Joke. The Problem Is Spotting Reality

Every seasoned broadcaster or media creator has a radar for nonsense. You have spent years vetting sources, confirming facts, and throwing out anything that feels unreliable. The complication now is that artificial intelligence can wrap unreliable content in a polished package that looks and sounds legitimate.

This article is not aimed at people creating AI impersonation channels. If that is your hobby, nothing here will make you feel more confident about it. This is for the professionals whose job is to keep the information stream as clean as possible. You are not making deepfakes. You are trying to avoid stepping in them and trying even harder not to amplify them.

Once something looks real and sounds real, a significant segment of your audience will assume it is real. That changes the amount of scrutiny you need to apply. The burden now falls on people like you to pause before reacting.

Two Clips That Tell the Whole Story

Consider two current examples. The first is the synthetic Biden speech that appears all over social media. It presents a younger, steadier president delivering remarks that many supporters wish he would make. It is polished, convincing, and created entirely by artificial intelligence.

The second is the cartoonish Trump fighter jet video that shows him dropping waste on unsuspecting civilians. No one believes it is real. Yet both types of content live in the same online ecosystem and both get shared widely.

The underlying facts do not matter once the clip begins circulating. If you repeat it on the air without checking it, you become the next link in the distribution chain. Not every untrue clip is misinformation. People get things wrong without intending to deceive, and the law recognizes that. What changes here is the plausibility. When an artificial performance can fool a reasonable viewer, the difference between a mistake and a misleading impression becomes something a finder of fact sorts out later. Your audience cannot make that distinction in real time.

Parody and Satire Still Exist, but AI Is Blurring the Edges

Parody imitates a person to comment on that person. Satire uses the imitation to comment on something else. These categories worked because traditional impersonations were obvious. A cartoon voice or exaggerated caricature did not fool anyone.

A convincing AI impersonation removes the cues that signal it is a joke. It sounds like the celebrity. It looks like the celebrity. It uses words that fit the celebrity’s public image. It stops functioning as commentary and becomes a manufactured performance that appears authentic. That is when broadcasters get pulled into the confusion even though they had nothing to do with the creation.

When the Fake Version Starts Crowding Out the Real One

Public figures choose when and where to speak. A Robert De Niro interview has weight because he rarely gives them. A carefully planned appearance on a respected platform signals importance.

When dozens of artificial De Niros begin posting daily commentary, the significance of the real appearance is reduced. The market becomes crowded. Authenticity becomes harder to protect. This is not only a reputational issue. It is an economic one rooted in scarcity and control.

You may think you are sharing a harmless clip. In reality, you might be participating in the dilution of someone’s legitimate business asset.

Disclaimers Are Not Shields

Many deepfake channels use disclaimers. They say things like “this is parody” or “this is not the real person.” A parking garage can also post a sign saying it is not responsible for damage to your car. That sign does not absolve the garage when something collapses on your vehicle.

A disclaimer that no one negotiates or meaningfully acknowledges does not protect the creator or the people who share the clip. If viewers believe it is real, the disclaimer (often hidden in plain sight) is irrelevant.

The Liability No One Expects: Damage You Did Not Create

You can become responsible for the fallout without ever touching the original video. If you talk about a deepfake on the air, share it on social media, or frame it as something that might be true, you help it spread. Your audience trusts you. If you repeat something inaccurate, even unintentionally, they begin questioning your judgment. One believable deepfake can undermine years of credibility.

Platforms Profit From the Confusion

Here is the structural issue that rarely gets discussed. Platforms have every financial incentive to push deepfakes. They generate engagement. Engagement generates revenue. Revenue satisfies stockholders. This stands in tension with the spirit of Section 230, which was designed to protect neutral platforms, not platforms that amplify synthetic speech they know is likely to deceive.

If a platform has the ability to detect and label deepfakes and chooses not to, the responsibility shifts to you. The platform benefits. You absorb the risk.

What Media Professionals Should Do

You do not need new laws. You do not need to give warnings to your audience. You do not need to panic. You do need to stay sharp.

Here is the quick test. Ask yourself four questions.

Is the source authenticated?

Has the real person ever said anything similar?

Is the platform known for synthetic or poorly moderated content?

Does anything feel slightly off even when the clip looks perfect?

If any answer gives you pause, treat the clip as suspect. Treat it as content, not truth.
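For producers who keep a written record of how a clip was vetted, the four-question test can be expressed as a simple “any doubt” rule. The sketch below is illustrative only and is not a legal standard. The `ClipCheck` fields and the `treat_as_suspect` function are hypothetical names, and a person still has to answer every question.

```python
from dataclasses import dataclass


@dataclass
class ClipCheck:
    """Answers to the four screening questions for one clip (hypothetical structure)."""
    source_authenticated: bool          # Is the source authenticated?
    said_similar_before: bool           # Has the real person ever said anything similar?
    platform_known_for_synthetic: bool  # Is the platform known for synthetic or poorly moderated content?
    feels_off: bool                     # Does anything feel slightly off?


def treat_as_suspect(check: ClipCheck) -> bool:
    """Return True if any single answer gives pause."""
    pauses = [
        not check.source_authenticated,
        not check.said_similar_before,
        check.platform_known_for_synthetic,
        check.feels_off,
    ]
    return any(pauses)


# A clip that passes three questions but still "feels off" is treated as suspect.
clip = ClipCheck(
    source_authenticated=True,
    said_similar_before=True,
    platform_known_for_synthetic=False,
    feels_off=True,
)
print(treat_as_suspect(clip))  # True
```

The rule deliberately uses `any` rather than a score or a majority vote: one unresolved doubt is enough to treat the clip as content rather than truth.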

Final Thought (at Least for Now)

Artificial intelligence will only become more convincing. Your role is not to serve as a gatekeeper. Your role is to maintain professional judgment. When a clip sits between obviously fake and plausibly real, that is the moment to verify and, when necessary, seek guidance. There is little doubt that the proliferation of phony internet “shows” is about to become a contentious legal, ethical, and financial issue for the industry.

Matthew B. Harrison is a media and intellectual property attorney who advises radio hosts, content creators, and creative entrepreneurs. He has written extensively on fair use, AI law, and the future of digital rights. Reach him at Matthew@HarrisonMediaLaw.com or read more at TALKERS.com.

Share this with your network

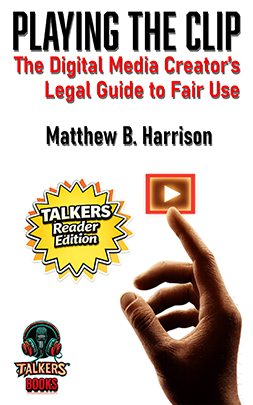

For years, Harrison Legal Group has informed media creators about the legal risks of using copyrighted clips, songs, images, and broadcasts without permission. The issue became central enough to inspire my book, Playing the Clip: The Definitive Digital Media Creator’s Guide to Fair Use (TALKERS Books, 2026). The premise was straightforward: modern media runs on borrowed material, but borrowing comes with legal exposure.

For years, Harrison Legal Group has informed media creators about the legal risks of using copyrighted clips, songs, images, and broadcasts without permission. The issue became central enough to inspire my book, Playing the Clip: The Definitive Digital Media Creator’s Guide to Fair Use (TALKERS Books, 2026). The premise was straightforward: modern media runs on borrowed material, but borrowing comes with legal exposure. TALKERS magazine associate publisher) Matthew B. Harrison, a work designed for today’s news/talk media environment where audio, video, screenshots, and quotes are not just supporting elements – but serve as the actual content itself. This technique has become particularly prevalent on YouTube and even cable news/talk TV but increasingly appears in audio form as what used to be called “actualities” – sound from another source.

TALKERS magazine associate publisher) Matthew B. Harrison, a work designed for today’s news/talk media environment where audio, video, screenshots, and quotes are not just supporting elements – but serve as the actual content itself. This technique has become particularly prevalent on YouTube and even cable news/talk TV but increasingly appears in audio form as what used to be called “actualities” – sound from another source. book explains the legal concept of fair use not as a permission structure, but as a legal defense raised after copying has already occurred – an uncomfortable but essential distinction that underpins the entire analysis.

book explains the legal concept of fair use not as a permission structure, but as a legal defense raised after copying has already occurred – an uncomfortable but essential distinction that underpins the entire analysis. Cumulus’s relationships with advertisers, goodwill, and market share, and push it towards financial collapse. The District Court accordingly enjoined Nielsen’s anticompetitive conduct.

Cumulus’s relationships with advertisers, goodwill, and market share, and push it towards financial collapse. The District Court accordingly enjoined Nielsen’s anticompetitive conduct.  The rise of independent, talk show-style political commentary on YouTube has created a new class of media actors who do not see themselves as broadcasters, journalists, or publishers. They see themselves as creators. That distinction is real in terms of identity, tone, and platform. It is not real where it matters most: liability.

The rise of independent, talk show-style political commentary on YouTube has created a new class of media actors who do not see themselves as broadcasters, journalists, or publishers. They see themselves as creators. That distinction is real in terms of identity, tone, and platform. It is not real where it matters most: liability. AI is now embedded in the modern newsroom. Not as a headline, not as a novelty, but as infrastructure. It drafts outlines, summarizes complex reporting, surfaces background details, and accelerates prep for live conversations. For media creators operating under relentless deadlines, that efficiency is not theoretical. It is practical and daily.

AI is now embedded in the modern newsroom. Not as a headline, not as a novelty, but as infrastructure. It drafts outlines, summarizes complex reporting, surfaces background details, and accelerates prep for live conversations. For media creators operating under relentless deadlines, that efficiency is not theoretical. It is practical and daily. TALKERS magazine, the leading trade publication serving America’s professional broadcast talk radio and associated digital communities since 1990, is pleased to participate as the presenting sponsor of the forthcoming Intercollegiate Broadcasting System (IBS) conference for the second consecutive year.

TALKERS magazine, the leading trade publication serving America’s professional broadcast talk radio and associated digital communities since 1990, is pleased to participate as the presenting sponsor of the forthcoming Intercollegiate Broadcasting System (IBS) conference for the second consecutive year.

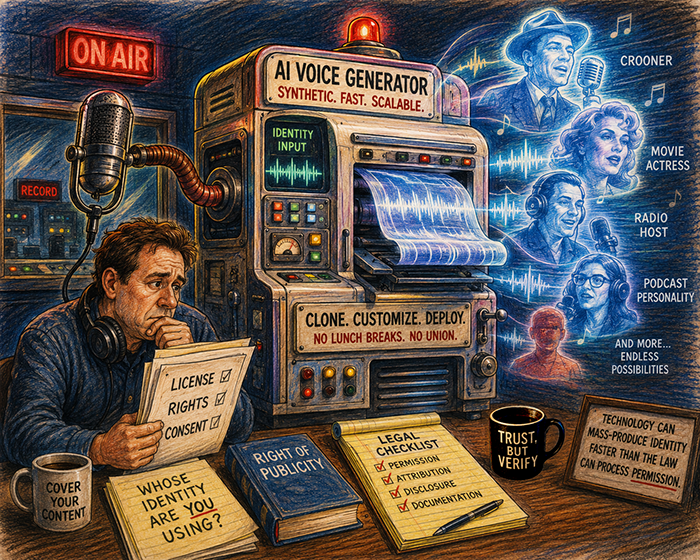

For years, “protect your name and likeness” sounded like lawyer advice in search of a problem. Abstract. Defensive. Easy to ignore. That worked when misuse required effort, intent, and a human decision-maker willing to cross a line.

For years, “protect your name and likeness” sounded like lawyer advice in search of a problem. Abstract. Defensive. Easy to ignore. That worked when misuse required effort, intent, and a human decision-maker willing to cross a line. Every media creator knows this moment. You are building a segment, you find the clip that makes the point land, and then the hesitation kicks in. Can I use this? Or am I about to invite a problem that distracts from the work itself?

Every media creator knows this moment. You are building a segment, you find the clip that makes the point land, and then the hesitation kicks in. Can I use this? Or am I about to invite a problem that distracts from the work itself? The Problem Is No Longer Spotting a Joke. The Problem Is Spotting Reality

The Problem Is No Longer Spotting a Joke. The Problem Is Spotting Reality

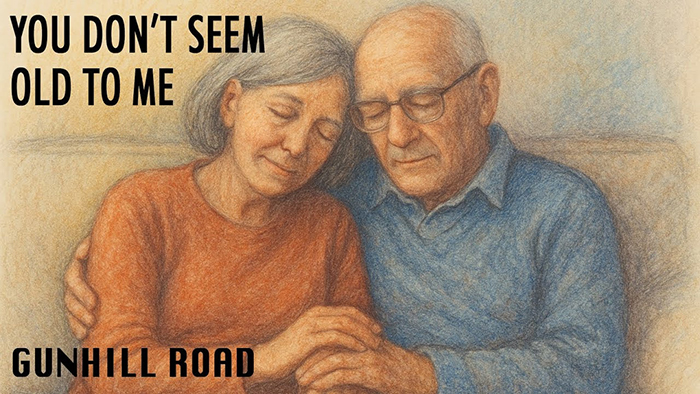

examining the lifelong love affair of a fictional couple from childhood to old age – an emotional roller coaster ride reflecting the romantic ups and downs of a complex relationship. The tear-jerker is a departure from the heavy-hitting social commentaries that have made Gunhill Road a favorite among talk radio hosts and audiences for the past half decade. The intriguing group, formed in the late 1960s, is still going strong with core members Steve Goldrich, Paul Reisch, Brian Koonin, and Michael Harrison. Matthew B. Harrison produces the ensemble’s videos that employ leading-edge techniques and technology. Ms. Farber, who shares lead vocals on the song with Brian Koonin, is a talented singer, songwriter, and instrumentalist with a number of singles, albums and television commercial soundtracks among her credits. She is presently an advocate for the well-being of nursing home residents and organizer of initiatives to bring live music into their lives.

examining the lifelong love affair of a fictional couple from childhood to old age – an emotional roller coaster ride reflecting the romantic ups and downs of a complex relationship. The tear-jerker is a departure from the heavy-hitting social commentaries that have made Gunhill Road a favorite among talk radio hosts and audiences for the past half decade. The intriguing group, formed in the late 1960s, is still going strong with core members Steve Goldrich, Paul Reisch, Brian Koonin, and Michael Harrison. Matthew B. Harrison produces the ensemble’s videos that employ leading-edge techniques and technology. Ms. Farber, who shares lead vocals on the song with Brian Koonin, is a talented singer, songwriter, and instrumentalist with a number of singles, albums and television commercial soundtracks among her credits. She is presently an advocate for the well-being of nursing home residents and organizer of initiatives to bring live music into their lives.

and the gut-wrenching chaos of informational overload. Non-partisan lyrics cry out: “Too much information clogging up my brain… and I can’t change the station; it’s driving me insane!” Co-written and performed by band members Steve Goldrich, Paul Reisch, Brian Koonin, and Michael Harrison, the dramatic images accompanying the music include a dynamic montage of exasperated people being driven to the brink of madness by the pressure of what feels like non-stop, negative NOISE. Produced by

and the gut-wrenching chaos of informational overload. Non-partisan lyrics cry out: “Too much information clogging up my brain… and I can’t change the station; it’s driving me insane!” Co-written and performed by band members Steve Goldrich, Paul Reisch, Brian Koonin, and Michael Harrison, the dramatic images accompanying the music include a dynamic montage of exasperated people being driven to the brink of madness by the pressure of what feels like non-stop, negative NOISE. Produced by  Every talk host knows the move: play the clip. It might be a moment from late-night TV, a political ad, or a viral post that sets the table for the segment. It’s how commentary comes alive – listeners hear it, react to it, and stay tuned for your take.

Every talk host knows the move: play the clip. It might be a moment from late-night TV, a political ad, or a viral post that sets the table for the segment. It’s how commentary comes alive – listeners hear it, react to it, and stay tuned for your take. When we first covered this case, it felt like only 2024 could invent it – a disgraced congressman, George Santos, selling Cameos and a late-night host, Jimmy Kimmel, buying them under fake names to make a point about truth and ego. A year later, the Second Circuit turned that punchline into precedent. (Read story here:

When we first covered this case, it felt like only 2024 could invent it – a disgraced congressman, George Santos, selling Cameos and a late-night host, Jimmy Kimmel, buying them under fake names to make a point about truth and ego. A year later, the Second Circuit turned that punchline into precedent. (Read story here:  Jimmy Kimmel’s first monologue back after the recent suspension had the audience laughing and gasping, and, in the hands of countless radio hosts and podcasters, replaying. Within hours, clips of his bit weren’t just being shared online. They were being chopped up, (re)framed, and (re)analyzed as if they were original show content. For listeners, that remix feels fresh. For lawyers, it is a fair use minefield.

Jimmy Kimmel’s first monologue back after the recent suspension had the audience laughing and gasping, and, in the hands of countless radio hosts and podcasters, replaying. Within hours, clips of his bit weren’t just being shared online. They were being chopped up, (re)framed, and (re)analyzed as if they were original show content. For listeners, that remix feels fresh. For lawyers, it is a fair use minefield. Charlie Kirk’s tragic assassination shook the talk radio world. Emotions were raw, and broadcasters across the spectrum tried to capture that moment for their audiences. Charles Heller of KVOI in Tucson

Charlie Kirk’s tragic assassination shook the talk radio world. Emotions were raw, and broadcasters across the spectrum tried to capture that moment for their audiences. Charles Heller of KVOI in Tucson  Superman just flew into court – not against Lex Luthor, but against Midjourney. Warner Bros. Discovery is suing the AI platform, accusing it of stealing the studio’s crown jewels: Superman, Batman, Wonder Woman, Scooby-Doo, Bugs Bunny, and more.

Superman just flew into court – not against Lex Luthor, but against Midjourney. Warner Bros. Discovery is suing the AI platform, accusing it of stealing the studio’s crown jewels: Superman, Batman, Wonder Woman, Scooby-Doo, Bugs Bunny, and more. Imagine an AI trained on millions of books – and a federal judge saying that’s fair use. That’s exactly what happened this summer in Bartz v. Anthropic, a case now shaping how creators, publishers, and tech giants fight over the limits of copyright.

Imagine an AI trained on millions of books – and a federal judge saying that’s fair use. That’s exactly what happened this summer in Bartz v. Anthropic, a case now shaping how creators, publishers, and tech giants fight over the limits of copyright. Ninety seconds. That’s all it took. One of the interviews on the TALKERS Media Channel – shot, edited, and published by us – appeared elsewhere online, chopped into jumpy cuts, overlaid with AI-generated video game clips, and slapped with a clickbait title. The credit? A link. The essence of the interview? Repurposed for someone else’s traffic.

Ninety seconds. That’s all it took. One of the interviews on the TALKERS Media Channel – shot, edited, and published by us – appeared elsewhere online, chopped into jumpy cuts, overlaid with AI-generated video game clips, and slapped with a clickbait title. The credit? A link. The essence of the interview? Repurposed for someone else’s traffic. Imagine a listener “talking” to an AI version of you – trained entirely on your old episodes. The bot knows your cadence, your phrases, even your voice. It sounds like you, but it isn’t you.

Imagine a listener “talking” to an AI version of you – trained entirely on your old episodes. The bot knows your cadence, your phrases, even your voice. It sounds like you, but it isn’t you.

Imagine SiriusXM acquires the complete Howard Stern archive – every show, interview, and on-air moment. Months later, it debuts “Howard Stern: The AI Sessions,” a series of new segments created with artificial intelligence trained on that archive. The programming is labeled AI-generated, yet the voice, timing, and style sound like Stern himself.

Imagine SiriusXM acquires the complete Howard Stern archive – every show, interview, and on-air moment. Months later, it debuts “Howard Stern: The AI Sessions,” a series of new segments created with artificial intelligence trained on that archive. The programming is labeled AI-generated, yet the voice, timing, and style sound like Stern himself. You did everything right – or so you thought. You used a short clip, added commentary, or reshared something everyone else was already posting. Then one day, a notice shows up in your inbox. A takedown. A demand. A legal-sounding, nasty-toned email claiming copyright infringement, and asking for payment.

You did everything right – or so you thought. You used a short clip, added commentary, or reshared something everyone else was already posting. Then one day, a notice shows up in your inbox. A takedown. A demand. A legal-sounding, nasty-toned email claiming copyright infringement, and asking for payment. Mark Walters

Mark Walters

In radio and podcasting, editing isn’t just technical – it shapes narratives and influences audiences. Whether trimming dead air, tightening a guest’s comment, or pulling a clip for social media, every cut leaves an impression.

In radio and podcasting, editing isn’t just technical – it shapes narratives and influences audiences. Whether trimming dead air, tightening a guest’s comment, or pulling a clip for social media, every cut leaves an impression. Let’s discuss how CBS’s $16 million settlement became a warning shot for every talk host, editor, and content creator with a mic.

Let’s discuss how CBS’s $16 million settlement became a warning shot for every talk host, editor, and content creator with a mic. In early 2024, voters in New Hampshire got strange robocalls. The voice sounded just like President Joe Biden, telling people not to vote in the primary. But it wasn’t him. It was an AI clone of his voice – sent out to confuse voters.

In early 2024, voters in New Hampshire got strange robocalls. The voice sounded just like President Joe Biden, telling people not to vote in the primary. But it wasn’t him. It was an AI clone of his voice – sent out to confuse voters. When Georgia-based nationally syndicated radio personality, and Second Amendment advocate Mark Walters (longtime host of “Armed American Radio”) learned that ChatGPT had falsely claimed he was involved in a criminal embezzlement scheme, he did what few in the media world have dared to do. Walters stood up when others were silent, and took on an incredibly powerful tech company, one of the biggest in the world, in a court of law.

When Georgia-based nationally syndicated radio personality, and Second Amendment advocate Mark Walters (longtime host of “Armed American Radio”) learned that ChatGPT had falsely claimed he was involved in a criminal embezzlement scheme, he did what few in the media world have dared to do. Walters stood up when others were silent, and took on an incredibly powerful tech company, one of the biggest in the world, in a court of law. In a ruling that should catch the attention of every talk host and media creator dabbling in AI, a Georgia court has dismissed “Armed American Radio” syndicated host Mark Walters’ defamation lawsuit against OpenAI. The case revolved around a disturbing but increasingly common glitch: a chatbot “hallucinating” canonically false but believable information.

In a ruling that should catch the attention of every talk host and media creator dabbling in AI, a Georgia court has dismissed “Armed American Radio” syndicated host Mark Walters’ defamation lawsuit against OpenAI. The case revolved around a disturbing but increasingly common glitch: a chatbot “hallucinating” canonically false but believable information.

In the ever-evolving landscape of digital media, creators often walk a fine line between inspiration and infringement. The 2015 case of “Equals Three, LLC v. Jukin Media, Inc.” offers a cautionary tale for anyone producing reaction videos or commentary-based content: fair use is not a free pass, and transformation is key.

In the ever-evolving landscape of digital media, creators often walk a fine line between inspiration and infringement. The 2015 case of “Equals Three, LLC v. Jukin Media, Inc.” offers a cautionary tale for anyone producing reaction videos or commentary-based content: fair use is not a free pass, and transformation is key. As the practice of “clip jockeying” becomes an increasingly ubiquitous and taken-for-granted technique in modern audio and video talk media, an understanding of the legal concept “fair use” is vital to the safety and survival of practitioners and their platforms.

As the practice of “clip jockeying” becomes an increasingly ubiquitous and taken-for-granted technique in modern audio and video talk media, an understanding of the legal concept “fair use” is vital to the safety and survival of practitioners and their platforms.