When Your Voice Becomes the Product

By Matthew B. Harrison

TALKERS, VP/Associate Publisher

Harrison Legal Group, Senior Partner

Goodphone Communications, Executive Producer

For years, Harrison Legal Group has informed media creators about the legal risks of using copyrighted clips, songs, images, and broadcasts without permission. The issue became central enough to inspire my book, Playing the Clip: The Definitive Digital Media Creator’s Guide to Fair Use (TALKERS Books, 2026). The premise was straightforward: modern media runs on borrowed material, but borrowing comes with legal exposure.

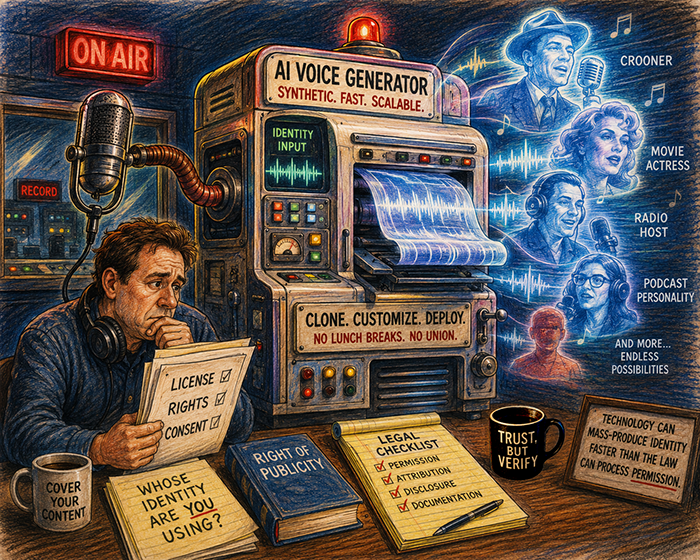

Now the fight is shifting toward something more personal.

The voice itself.

Not the recording. Not necessarily the script. The identity embedded in the sound.

That distinction is becoming increasingly important as AI voice systems improve to the point where listeners can recognize a performer even when the company insists it used a “different actor” or synthetic generation. The Scarlett Johansson dispute with OpenAI may become the defining example. Johansson alleged that OpenAI created a voice assistant that sounded “eerily similar” to her after she declined the company’s request to license her actual voice. OpenAI denied intentionally imitating her and said the voice belonged to another actress, but it still paused the voice, which it had branded “Sky,” after backlash intensified.

The case matters because it exposes a legal gray area many creators misunderstand.

A voice is generally not protected by copyright law in the same way a song recording is. But a recognizable voice may still trigger claims involving the right of publicity, false endorsement, unfair competition, or misappropriation of identity. In other words, the legal risk is often not “you copied audio.” The risk is “you exploited identity.”

That distinction matters for broadcasters, podcasters, advertisers, and AI companies experimenting with synthetic hosts, cloned announcers, or celebrity-style narration.

If listeners reasonably believe a celebrity endorsed, participated in, or authorized the content, the legal exposure changes dramatically.

Another recent example involves Dua Lipa and Samsung. According to reports, Lipa alleges Samsung used her image on television packaging without authorization, creating the impression she endorsed the product. Samsung reportedly claims the image came from a third-party provider that assured the company all rights were cleared.

That defense may sound familiar to media professionals.

“We got it from somebody else.”

Legally, that is often not enough.

A broadcaster cannot avoid defamation liability merely because a guest made the statement. A publisher cannot automatically avoid infringement exposure because a freelancer supplied the material. And a company may not avoid publicity-rights claims simply because a vendor promised the paperwork existed.

The underlying legal theme is the same: delegation is not immunity.

The AI layer complicates things further because modern systems do not necessarily reproduce exact copies. Instead, they generate approximations that may still evoke a specific person strongly enough to create marketplace confusion.

Courts have dealt with similar issues before. Bette Midler and Tom Waits both successfully sued over soundalike performances used in advertising after declining to participate themselves. The principle is not new. AI simply makes imitation faster, cheaper, and easier to distribute.

That should concern media creators who assume these disputes only affect billion-dollar tech companies.

They do not.

A local station, podcast producer, YouTube creator, or advertiser can now generate celebrity-adjacent voices in seconds. The barrier to entry collapsed. The liability did not.

The safest question is no longer merely “Do we own the audio?”

It is: “Whose identity does this remind people of?”

That answer may determine whether the next lawsuit is really about technology at all.

Or simply old-fashioned commercial exploitation wearing futuristic clothing.

Get your copy of “Play the Clip: The Definitive Digital Media Creator’s Guide to Fair Use” by filling out the request form at HarrisonMediaLaw.com.

Matthew B. Harrison is a media and intellectual property attorney who advises radio hosts, content creators, and creative entrepreneurs. He has written extensively on fair use, AI law, and the future of digital rights. Reach him at Matthew@HarrisonLegalGroup.com or read more at TALKERS.com.