By Matthew B. Harrison

TALKERS, VP/Associate Publisher

Harrison Media Law, Senior Partner

Goodphone Communications, Executive Producer

AI is now embedded in the modern newsroom. Not as a headline, not as a novelty, but as infrastructure. It drafts outlines, summarizes complex reporting, surfaces background details, and accelerates prep for live conversations. For media creators operating under relentless deadlines, that efficiency is not theoretical. It is practical and daily.

That reality raises a quiet but consequential legal question. When AI contributes to your research, what does verification now require?

Professional hosts are not reading raw chatbot answers on air and calling it journalism. That caricature misses the real issue. What is actually happening is subtler and far more common.

AI now sits inside research workflows. Producers use it for background. Hosts use it to summarize reporting. Teams use it to outline controversies or draft rundowns. Most of the time, it works. Sometimes, however, it invents.

When that invention involves a real person and a serious allegation, the legal analysis looks familiar.

For public figures, defamation requires proof of actual malice – knowledge of falsity or reckless disregard for truth. For private figures, negligence is usually enough. In both cases, the focus is not on the tool. It is on the content creator’s conduct.

AI does not change the elements. It changes the context in which reasonableness is judged.

Courts have long held that repeating a defamatory statement can create liability, even if someone else said it first. If you rely on a blog, and that blog relied on AI, and the allegation is false, the question becomes whether your reliance was reasonable.

Was the source reputable? Was the claim inherently improbable? Were there obvious red flags? Was contradictory information readily available?

AI’s reputation for “hallucinating” facts now forms part of that backdrop. Widespread awareness that these systems can fabricate citations, merge identities, or invent accusations becomes relevant when a court evaluates your verification choices.

This does not mean using AI indicates reckless disregard. It means using AI does not excuse skipping verification when the stakes are high.

The more specific and damaging the claim, the greater the duty to confirm it through independent, reliable sources. Not another prompt. Not a circular reference to the same unverified blog. Rather, a primary record, official statement, or established reporting.

Documentation matters. If challenged, being able to show that you checked multiple sources before broadcast can be decisive.

None of this is new doctrine. What is new is how seamlessly AI blends into ordinary research habits. That integration makes it easier to forget that the legal question is still about human judgment.

The law will not ask whether your workflow was efficient. It will ask whether your conduct was reasonable under the circumstances.

In the age of AI, verification is not a courtesy. It is risk management.

Matthew B. Harrison is a media and intellectual property attorney who advises radio hosts, content creators, and creative entrepreneurs. He has written extensively on fair use, AI law, and the future of digital rights. Reach him at Matthew@HarrisonMediaLaw.com or read more at TALKERS.com.

Leader of Minnesota. We will be greeted as liberators. Death to fraud investigations!” Via social media, O’Donnell posted: “I want to take a moment to offer my sincerest apologies for a post I made about Minnesota’s Governor that, quite frankly, I am deeply ashamed of. It was irresponsible and completely inappropriate and I have since taken it down. It served only to deepen divisions at a time when unity and basic human decency are most needed. My primary responsibility as a broadcaster, a father, and a person is to always comport myself professionally, appropriately, and compassionately, and I failed to do that. Again, I am truly, deeply, unequivocally sorry.”

out. The power-packed, one-day agenda is being organized and designed to address the field of talk media’s most pressing and existential issues. TALKERS publisher Michael Harrison states, “This important conference will illuminate the forward path of the expanding talk media universe, including all aspects of digital communications from AI and podcasting to streaming networks. As has been its tradition, this latest TALKERS conference will approach the onrushing future of the talk business from a radio perspective. This crucial gathering will cover the new undeniable realities of the radio business for those who not only want to survive but thrive as well. It will be about opportunities, networking, and entrepreneurism for individuals in talent, programming, sales, marketing, and management who are serious about staying in the game.”

News/talk, sports talk, all-news, and general talk will be amply covered. There will be over 50 top industry speakers, and registration is limited to ensure intimacy. Attendance at the conference is only open to members of the working media and directly associated industries as well as students enrolled in accredited learning institutions. All attendees will be required to register in advance on the phone payable by credit card. Because attendance will be limited, the conference is again expected to be an early sellout. The all-inclusive registration fee covering convention events, exhibits, food, and services for the day is $260. However, attendees can take advantage of the early bird fee of $150 available until 5:00 pm ET on Friday, April 3. All registrations are non-refundable. This power-packed, one-day event is being presented in association with Hofstra’s multi-award-winning station, WRHU Radio, and the school’s Lawrence Herbert School of Communication.

Management’s Michael Del Nin in 2022 and began working together “to try to acquiring Cox Radio, with Del Nin agreeing that Warshaw would manage the business as CEO upon successful acquisition.” While both parties were doing due diligence on the CMG deal, Warshaw learned that an Audacy majority stake holder was willing to sell its stake in the company. Warshaw says he steered SFM and Del Nin to the deal that made SFM a majority stake holder of the new Audacy in early 2024. Warshaw alleges he was promised he’d be the next CEO of Audacy or that he would get 5% of SFM’s profits from the Audacy acquisition. Later, SFM filed a motion to strike arguing that talks between Del Nin and Warshaw did not rise to the level of an employment offer. In his recent filing with the court, Warshaw says SFM reads “the Complaint in the light least favorable to Plaintiff. And they introduce new facts and make factual arguments that must be left for resolution by a jury at trial. Even so, based on the Complaint’s detailed allegations, Defendants’ arguments fall apart. Defendants ask the Court to believe that Jeffrey Warshaw, a veteran executive and dealmaker in radio, attempted to ‘cozy up’ to Defendants, newcomers to radio. But why did they seek an introduction to Warshaw in the first place? Why did they need Warshaw to source the Audacy transaction, and quickly ask him to introduce them to Audacy’s controlling debtholder? Why did Michael Del Nin call Warshaw 107 times between October 2023 and October 2024? On breach of contract, Defendants argue that the Complaint does not plead definite and certain terms of the contract between Warshaw and SFM. That ignores the definiteness of the contract terms alleged, as well as controlling precedent holding that an oral agreement is enforceable so long as missing terms can be ascertained by fair implication or industry custom. Defendants also downplay the value of Warshaw’s sourcing of the Audacy deal and his introduction of Defendants to the firm holding a controlling interest in Audacy debt.”

field with global technology platforms.” He underscored broadcasters’ unique and essential role in public safety, civic engagement and strengthening local democracy. Additionally, broadcasters heard from key policymakers shaping broadcast policy, including Sen. Ed Markey (D-MA), who spoke about the enduring value of broadcast radio and his leadership on the bipartisan effort to pass the AM Radio for Every Vehicle Act… and U.S. Rep. Richard Hudson (R-NC), chairman of the House Energy and Commerce Subcommittee on Communications and Technology, who spoke about the need for broadcast ownership rules to reflect today’s landscape and the importance of keeping AM radio in cars.

Radio Networks names two media sales pros to the organization. Rosanne Tipton joins Key-United as VP, sales and brand partnerships, and Robbie Eisen joins as director of strategic research and planning. Key-United president of sales Ron Russo says, “We’re absolutely thrilled to welcome both Rosanne and Robbie to the sales organization. Their energy, drive, and passion for building meaningful relationships perfectly align with our commitment to service and partnership. We’re confident they will make an immediate impact and accelerate the outstanding results our agency and client partners have come to expect from Key-United.”

breathe talk radio? We’re looking for a program director to lead our talk radio stations to the next level.

revenue increased $47.7 million, or 14.1%, driven primarily by continuing increases in demand for digital and podcast advertising, as well as increased non-cash trade revenue resulting from strategic marketing initiatives. Multiplatform Group revenue decreased $19.2 million, or 2.8%, primarily resulting from lower political revenues, as 2024 was a presidential election year, as well as a decrease in broadcast advertising in connection with continued uncertain market conditions.

Tuesday, March 10 and culminating with the championship game broadcast on Sunday, March 15. “We cannot wait for tip-off,” says Robert Blum, general manager of Compass Media Networks/Sports. “With the expansion of the Big Ten Tournament the six days will be packed with extremely compelling games that will help power the ratings of our affiliates and dazzle our audience.”

Your prospect – or, worse, an existing advertiser with cold feet – says “We tried radio. It didn’t work.” Often, the copy is the culprit, because it’s inside-out.

provides important benchmark measures for usage and behavior around streaming audio, podcasting, radio, smart audio, social media, and debuting this year, never-before-seen data on AI usage. Annual results and trending data from The Infinite Dial are relied upon by its audience of content producers, media companies, agencies, and the financial community.” The webinar will feature Edison vice president of research Megan Lazovick with special guest James Cridland, editor of Podnews.

eight stations in Minnesota. The second deal transfers 17 signals in a number of small markets in the state of Missouri to Carter Media Too, LLC., a Carrollton, Missouri‑based limited liability company led by the Carter Media family. Carter Media Too also operates three radio stations in Missouri plus the streaming platform, MidVid.com. Exclusive broker Kalil & Co says Miles and Michael Carter expressed their enthusiasm for expanding the organization’s footprint and restoring a strong local presence in news, weather, sports, and agriculture.

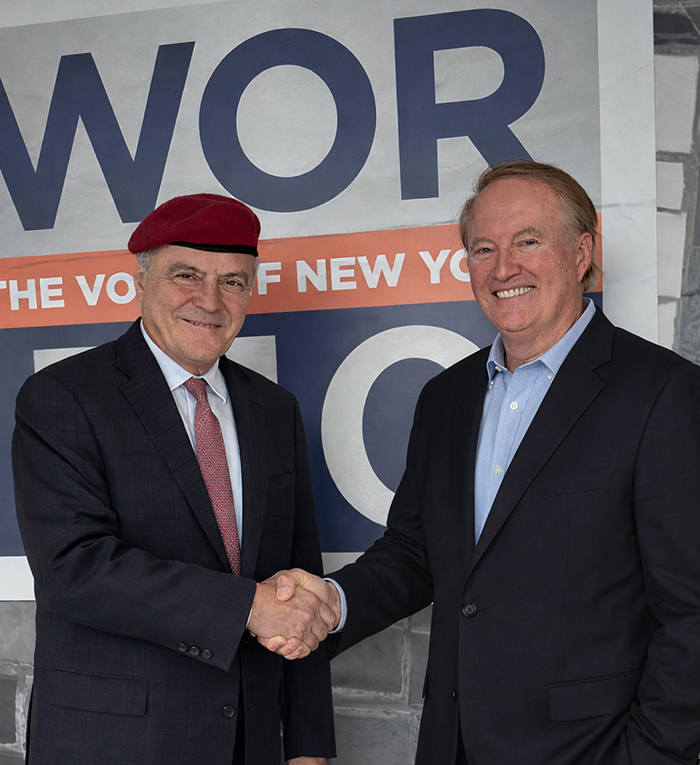

Station owner John Catsimatidis says, “Big name personalities define WABC Radio. Sean is a powerful addition to our Sunday lineup and another example of WABC’s unmatched ability to attract major talent and deliver must-hear talk. The show is going to be fast, fearless, and honest, with smart conversation, sharp opinion, and honest discussion about the stories driving the country.” Spicer comments, “WABC Radio doesn’t whisper, it leads! It is one of the most iconic and influential radio stations in the U.S. WABC Radio listeners expect truth, energy, and authenticity, and that’s exactly what I’m going to give them. I couldn’t be more excited to join the incredible 77WABC lineup.”

They wanted WOW but those calls were taken. Still the station was known as WOW and boasted that Johnny Carson started his broadcast career there. The station took the calls KXSP in 2005 and was broadcasting a sports talk format using ESPN content. SummitMedia sold the station’s tower land, prompting the station’s sign-off.

strength of the video podcast and podcast clips, while “The Joe Rogan Experience” is #2 with consumption split almost evenly between audio and a combination of video podcast and podcast clips. Perhaps not surprisingly, NPR’s “NPR News Now” (#4) consumption came primarily from audio with about 20% coming from podcast clips. Candace Owens’ “Candace” leapt 71 places to finish the January period at #10. Her program’s consumption was primarily a split between video podcast and podcast clips.

posts remain unchanged – iHeart Audience Network’s “Stuff You Should Know” at #1 and Audacy Podcast Network’s “48 Hours.” Salem Podcast Network’s “The Charlie Kirk Show” falls only one spot to #4. Other noteworthy shows include iHeart Audience Network’s “The Clay Travis and Buck Sexton Show,” which rises one place to #10, and Audioboom’s “The Bulwark Takes,” which climbs nine places to #21.

Colin Cowherd” to the program lineup at WJBR-AM, Tampa “Florida Alumni Radio 1010AM” in the 12:00 noon to 3:00 pm daypart. The program will also be simulcast on 92.1 FM in Hillsborough County, 103.1 FM in Pinellas County, and 104.7 HD2. The show will air weekdays from Noon – 3:00 p.m. ET.

6:00 pm to 7:00 pm, beginning March 9. The station says, “Craig is a veteran talk show host having previously worked at WGN Radio and 93 WIBC. He is also a frequent guest host with Radio America’s ‘The Dana Show’ and ‘The Chad Benson Show’ and WIBC’s ‘Tony Katz and the Morning News.’” Colling says, “How awesome is it to be at the same radio station I remember reading about in The Way Things Ought To Be! I can’t wait to get started. A big thank you to Bonny, Russell and the entire team at KSEV!”

According to the study, 22.07% (2366 stations) had women holding the general manager position in 2025. This is a slight increase from last year’s 21.67% and continues to show solid growth compared to 2004, when the percentage of female general managers was only 14.9%. MIW says, “Overall, the best management opportunities for women in radio continues to be in sales management. 35.31% (3561 stations) had a woman sales manager in 2025 which is basically flat from 35.67% in 2024. The greatest challenge for women in radio management continues to be in the area of program directors/brand managers. Women currently program 13.02% (289 stations) which is a slight gain from 12.38% in 2024.” MIW board president Sheila Kirby comments, “Twenty-five years of data give us clarity. We are encouraged to see movement in general manager and programming roles, particularly within the Top 100 markets. At the same time, flat growth in sales leadership and the continued underrepresentation of women in programming nationally remind us that progress is not automatic. Sustainable advancement requires intention. MIW remains committed to mentoring, advocating, and creating pathways for women to lead at every level of the industry.”

Indiana Wesleyan University, effective July 1. SRN VP/news Tom Tradup says, “Greg is the consummate professional whose solid coverage will be sorely missed by our Washington Bureau and by our listeners nationwide.” Tradup says Clugston served on the White House Travel Pool and often traveled on Air Force One covering President Trump for SRN News.